Split testing is just a simple way to compare two versions of something—a webpage, an email, or even an SMS message—to see which one actually works better. Think of it like a friendly competition: Version A (your original) goes head-to-head with Version B (your new idea). The winner is decided by a specific goal, like getting more clicks or making more sales.

Understanding the Core Idea of Split Testing

Let’s say you own a bakery and want to sell more cookies. You could bake one batch using your classic recipe (Version A) and a second batch with extra chocolate chips (Version B). You offer free samples of both and see which one people buy more of. That’s a split test in a nutshell! It’s a straightforward, powerful method for making decisions based on real customer behavior instead of just guessing what they want.

This exact same idea applies in the digital world. Version A is your original, which we often call the “control,” and Version B is the new variation you want to test. The key is to change only one thing at a time so you can measure its direct impact. This data-first approach lets you fine-tune your marketing one improvement at a time.

How It Works in Practice

The process is pretty simple: you show each version to a similar-sized group of people and track a specific outcome. By looking at the data, you can see which version helps you hit your goals more effectively. It’s a foundational concept in marketing that actually has its roots in randomized controlled trials from the 1920s.

The visual below shows how this works for a website, splitting traffic between the control and the new variation to see which one performs best.

This method ensures you can confidently attribute any difference in user behavior directly to the change you made. Ultimately, split testing turns optimization from an art form into a science, giving you clear proof of what your audience responds to. Understanding these interactions gives you incredible insight into your audience, which is a key part of what we cover in our guide on behavioral analytics.

Why Split Testing Is a Non-Negotiable for Growth

Let’s move past the theory. Split testing is where your business starts to build real, tangible momentum. Think of it less as a neat experiment and more as a fundamental engine for growth. Why? Because it systematically replaces guesswork with hard data, turning your website and marketing into finely tuned, revenue-generating machines.

Instead of crossing your fingers and hoping a new design works, testing lets you prove that changes are for the better before you roll them out to everyone. This simple step minimizes the risk of a new message or layout accidentally tanking your performance.

By testing, you gain undeniable proof of what your audience truly wants, not just what you think they want. This insight is the foundation for making smarter business decisions that lead directly to more engagement and higher revenue.

And the impact isn’t small. Just look at the software company Hubstaff. They ran a focused split test on their homepage and walked away with a massive 49% increase in sign-ups. This is the kind of incredible return you get when you build a culture around testing. It’s a common story for a reason. You can find more strategies for improving website conversion rates in our detailed guide.

From Guesswork to Guaranteed Wins

Relying on intuition alone is like navigating without a map—you might get lucky, but you’re more likely to get lost. Split testing is the data-driven compass that points you toward meaningful improvements. The best part is that every single test, whether it wins or loses, teaches you something valuable about how your customers think and act.

This continuous feedback loop helps you to:

- Make informed decisions based on actual user data, not just opinions.

- Understand customer preferences on a deeper level, from headlines that grab attention to offers they can’t refuse.

- Systematically improve key metrics like conversion rates and average order value.

The global market for A/B testing tools is expected to hit about USD 850.2 million in 2024, and the most successful companies run dozens of tests every year. This massive investment highlights a critical shift in business: top performers don’t guess, they test. By embracing this process, you create a powerful engine for consistent, predictable growth.

Where to Find Your Biggest Testing Opportunities

So, you understand what split testing is and why it’s a big deal. That’s the first step. The real question is, where do you start? Where should you focus your energy to see the biggest possible impact on your bottom line?

It’s tempting to jump in and start testing small things, like the color of a button on your checkout page. But the truth is, the most significant wins rarely come from these tiny tweaks. Instead, they come from testing the more fundamental elements of your user’s experience.

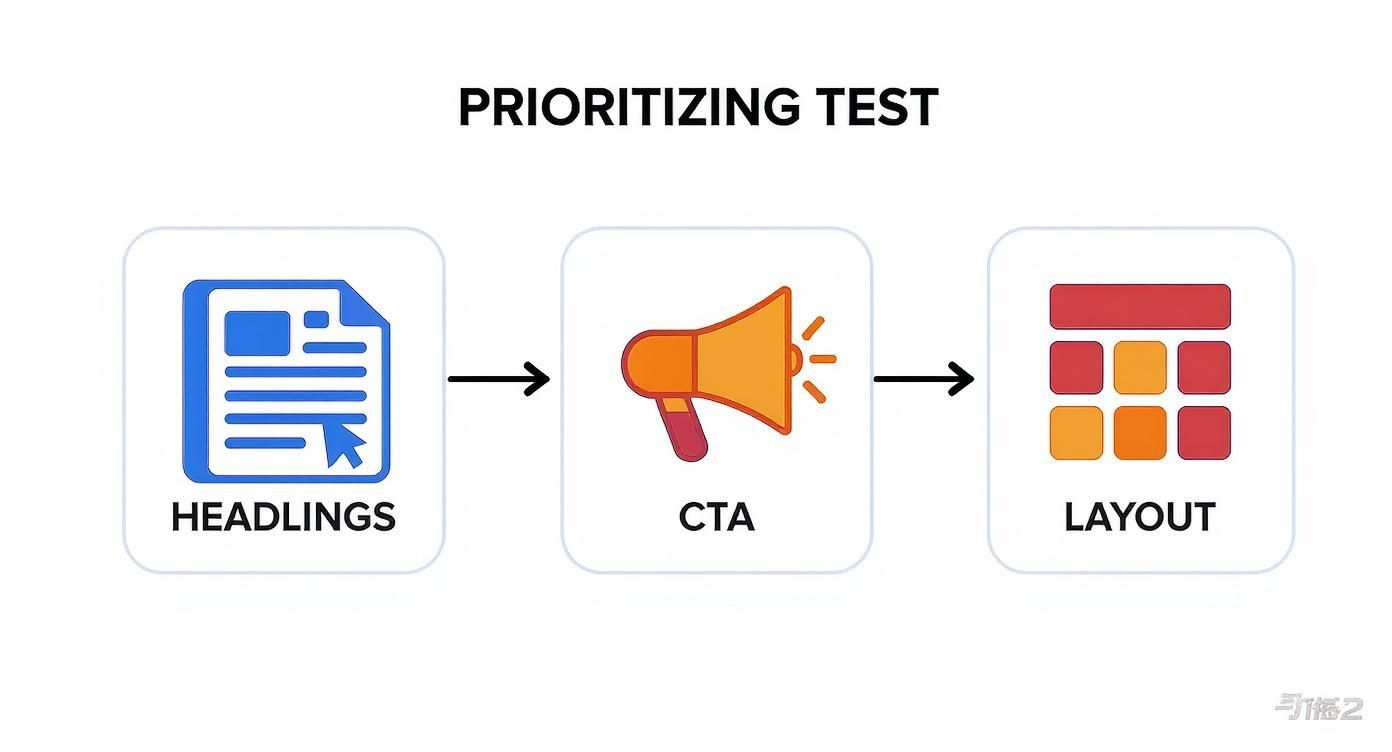

Prioritizing is everything. Not all tests are created equal—some have the potential to deliver massive gains, while others might just nudge the needle a tiny bit. If you focus on the high-impact areas first, you’ll get way more value out of every single experiment you run.

The goal here is to move beyond minor adjustments and test the core components that actually influence a user’s decision-making. Think less about colors and more about clarity, value, and motivation.

High-Impact Areas To Test

To find your biggest opportunities, look for the elements that directly shape how users see your offer and what motivates them to act. These are the changes most likely to produce substantial, game-changing results.

Here are some of the most powerful places to kick off your split testing journey:

- Headlines and Value Propositions: This is the first thing a visitor reads, and it can make or break their entire experience. Test different ways of communicating your core benefit to see what message truly clicks with your audience.

- Calls-to-Action (CTAs): Don’t just test the color—test the words. A simple change from “Learn More” to “Get Your Free Guide” can dramatically alter user behavior by setting much clearer expectations.

- Page Layout and Design: While a full redesign is a huge project, testing different layouts can uncover massive improvements in how users navigate your site. This is a crucial part of learning how to optimize landing pages for maximum effect.

- Offers and Promotions: The offer itself is a huge lever you can pull. Test variations like “20% Off” versus “Free Shipping” or a free trial versus a money-back guarantee. You might be surprised by what truly motivates your buyers.

Of course, your website isn’t the only place to find these opportunities. The same principles apply to your advertising. In fact, a crucial area for finding big wins is in optimizing B2B LinkedIn ad campaigns for high conversions. Testing headlines, copy, and offers works just as effectively there as it does on your own site.

To help you prioritize, think about testing in terms of potential impact. Some changes are small bets, while others are big swings that can lead to home runs.

Common Split Test Elements and Their Potential Impact

| Test Element | Example Variation | Typical Impact Level |
|---|---|---|
| Headline/Value Proposition | Testing a benefit-driven headline vs. a feature-focused one. | High |
| Offers & Promotions | “20% Off” vs. “Free Shipping on Orders Over $50”. | High |
| Page Layout | Single-column vs. multi-column design. | High |
| Call-to-Action (CTA) Text | “Start My Free Trial” vs. “Sign Up Now”. | Medium |
| Images and Videos | Using a product video instead of a static image. | Medium |
| Form Fields | Asking for 3 fields vs. 6 fields in a lead form. | Medium |
| Button Color | A green “Add to Cart” button vs. a blue one. | Low |
| Font Size/Style | Changing the body text from Arial to Times New Roman. | Low |

As you can see, focusing on the “High” impact elements first is the fastest way to see meaningful results. Once you’ve optimized the big stuff, you can then move on to refining the smaller details.

A Simple Framework for Running Your First Split Test

Getting started with split testing can feel like a huge undertaking, but it all boils down to a clear, repeatable process. Think of it as your playbook for turning hunches and smart ideas into hard data that actually improves your business. Having a solid framework means your results will be clean, trustworthy, and lead to real growth.

The whole thing kicks off with one simple but critical question: What are you trying to improve? Every single test needs a clear, measurable goal. This could be anything from boosting sign-ups and lowering cart abandonment to getting more demo requests. This goal is your North Star—it guides every decision you make from here on out.

Once your goal is set, you need a hypothesis. This is just an educated guess about what change will get you closer to that goal. A great hypothesis is always built on a real problem you’ve identified. For example: “Changing the CTA button from ‘Learn More’ to ‘Get Your Free Guide’ will increase form fills because it offers more tangible, immediate value.”

The Core Steps of a Split Test

With a solid hypothesis in hand, you’re ready to bring your test to life. This is where you’ll build your new version and start gathering the data that will either prove or disprove your initial idea. The key here is to keep it simple.

- Create the Variation: Build the new version (let’s call it Version B) that reflects your hypothesis. Stick to the golden rule: change only one element at a time. If you tweak the headline and the button color, you’ll never know which change actually made the difference.

- Split Your Audience: Your testing tool will automatically and randomly divide your traffic. One half sees the original (Version A), and the other half sees your new variation (Version B). This random split is what makes it a fair fight.

- Run the Test: Let it run long enough to hit statistical significance. That’s a fancy way of saying you have enough data to be confident the results aren’t just a random fluke. Depending on your traffic, this might take a few days or even a few weeks.
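
If you're curious what "automatically and randomly divide your traffic" can look like under the hood, here is a minimal Python sketch. It is not how any particular testing tool works; the function name `assign_variant`, the experiment label, and the visitor IDs are all made up for illustration. The idea is to hash each visitor ID so the split is close to 50/50 and a returning visitor always lands on the same version.

```python
import hashlib

def assign_variant(visitor_id: str, experiment: str = "cta-copy-test") -> str:
    """Place a visitor in 'A' or 'B' with roughly equal odds (hypothetical helper)."""
    # Hash the experiment name together with the visitor ID so the same
    # visitor always gets the same version within this experiment.
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Treat the hash as a big number: even goes to A, odd goes to B.
    return "A" if int(digest, 16) % 2 == 0 else "B"

# Simulate 10,000 made-up visitors and count how the split turns out.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)  # the two counts should land close to 5,000 each
```

Hashing instead of flipping a fresh coin on every page view is a design choice: it keeps the experience consistent for each person without having to store who saw what.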

This infographic gives you a great starting point for what to test first to see the biggest impact.

As you can see, foundational elements like headlines and calls-to-action almost always deliver bigger wins than minor design tweaks. For a deeper look into boosting your website’s performance, check out these proven conversion rate optimization tips.

Finally, it’s time to analyze the results. Did your new version win? Awesome—roll out the change! If it didn’t, the test is still a success. You just learned what doesn’t work, saving you from making a bad decision that could have cost you money. For those looking to dive deeper and move faster, you might find resources like an AI-powered A/B testing guide helpful.

How to Actually Understand Your Test Results

Launching a split test is the easy part. The real trick is figuring out if your results point to a genuine win or if it’s all just random noise. This is where statistical significance comes in, and it’s what separates guessing from knowing.

Think of it like flipping a coin. If you flip it ten times and get seven heads, you might think the coin is rigged. But it’s probably just a fluke. Now, if you flip it 1,000 times and get 700 heads, you can be pretty darn sure something’s up. Split testing is the same; you need enough data—a large enough sample size—to trust the outcome.
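
You can check the coin example yourself with a few lines of Python. This sketch assumes a perfectly fair coin and simply adds up the odds of every outcome with at least that many heads; `prob_at_least` is a name chosen here for illustration.

```python
from math import comb

def prob_at_least(heads: int, flips: int) -> float:
    """Chance of getting `heads` or more heads in `flips` flips of a fair coin."""
    # Count the outcomes with at least `heads` heads, then divide by
    # the total number of equally likely outcomes (2 ** flips).
    favorable = sum(comb(flips, k) for k in range(heads, flips + 1))
    return favorable / 2**flips

print(f"7+ heads in 10 flips:      {prob_at_least(7, 10):.3f}")   # 0.172
print(f"700+ heads in 1,000 flips: {prob_at_least(700, 1000):.1e}")
```

Seven or more heads in ten flips happens about 17% of the time with a fair coin, so it proves nothing. The second number is vanishingly small, which is why 700 heads out of 1,000 is so convincing.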

One of the most common mistakes is calling a winner the moment one version inches ahead. Early results can be incredibly misleading. You have to let the test run its course to gather enough data and be sure the outcome isn’t just a coincidence.

Looking Beyond the Overall Winner

A truly useful analysis goes deeper than just crowning a champion. Knowing Version B beat Version A is great, but the real gold is understanding why. This means you need to slice and dice your results to see how different groups of people behaved.

For example, you might find that your flashy new design worked wonders with mobile users but actually tanked conversions on desktop. An insight like that is far more valuable than just knowing the “overall winner.” Digging into these segments helps you truly understand what makes your audience tick.
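
Here is a small Python sketch of that kind of breakdown. Every number in it is invented to mirror the mobile-versus-desktop scenario above, and `lift_by_segment` is a hypothetical helper, not a feature of any specific tool.

```python
# Hypothetical results: (segment, version) -> (visitors, conversions)
results = {
    ("mobile", "A"): (4000, 120),
    ("mobile", "B"): (4000, 180),
    ("desktop", "A"): (2000, 100),
    ("desktop", "B"): (2000, 80),
}

def lift_by_segment(results):
    """Relative lift of Version B over Version A within each segment."""
    lifts = {}
    for segment in sorted({seg for seg, _ in results}):
        visitors_a, conversions_a = results[(segment, "A")]
        visitors_b, conversions_b = results[(segment, "B")]
        rate_a = conversions_a / visitors_a
        rate_b = conversions_b / visitors_b
        lifts[segment] = (rate_b - rate_a) / rate_a
    return lifts

for segment, lift in lift_by_segment(results).items():
    print(f"{segment}: {lift:+.0%}")
```

In this made-up data, Version B wins overall (260 conversions versus 220) while still losing on desktop. Keep in mind that each segment has fewer visitors than the full test, so a segment-level difference needs its own significance check before you act on it.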

Statistical rigor is key in split testing. As a rule of thumb, most in the industry aim for a confidence level of 90% or higher (95% is a common default) before they’ll trust the results enough to make a change. High-traffic sites can hit this mark quickly, while smaller stores might need to be more patient and let tests run longer.
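
To make "confidence level" a bit more concrete, here is a rough Python sketch of one common way to compute it, a two-proportion z-test. Testing tools may use different statistics, so treat this as an illustration only. The visitor and conversion counts are invented, and reporting confidence as one minus the p-value is a simplification.

```python
from math import sqrt
from statistics import NormalDist

def confidence_rates_differ(conv_a, n_a, conv_b, n_b):
    """Two-sided two-proportion z-test, returned as 1 - p-value."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    # Pool both groups to estimate the conversion rate if A and B were identical.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return 1 - p_value

# Hypothetical test: 5,000 visitors per version, 200 vs. 250 conversions.
confidence = confidence_rates_differ(200, 5000, 250, 5000)
print(f"Confidence: {confidence:.1%}")
print("Clears the 90% bar" if confidence >= 0.90 else "Keep the test running")
```

With these made-up numbers (a 4% versus 5% conversion rate), the result comes out above 90%. Shrink both groups to a tenth of the size with the same rates and it no longer does, which is exactly why smaller stores need more patience.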

From Data to Actionable Insights

At the end of the day, understanding test results is about more than just staring at numbers on a dashboard. It’s about turning that data into smart decisions that actually grow your business. This is a fundamental part of learning how to measure marketing campaign effectiveness the right way.

To make decisions you can stand behind, focus on these takeaways:

- Wait for Significance: Don’t jump the gun. Let your testing tool tell you when the numbers are solid enough to be trusted.

- Segment Your Data: Always look at how different groups responded. Check device types, where traffic came from, or new versus returning visitors.

- Learn from Losses: A “failed” test is never a waste of time. It tells you something valuable about what your audience doesn’t like, which is just as important as knowing what they do.

Common Questions About Split Testing

Once you start dipping your toes into testing, you’re bound to have some questions. It’s totally normal. Getting a handle on these details is what separates a frustrating, wheel-spinning effort from a real, money-making optimization program.

Let’s clear up a few of the most common ones so you can get your tests running with confidence.

Split Testing vs. Multivariate Testing

You’ll hear these two thrown around, sometimes even used for the same thing, but they’re actually different tools for different jobs. Knowing which one to grab is key.

Think of it this way:

Split testing (or A/B testing) is like a bake-off between two completely different cake recipes. You bake Recipe A, then you bake Recipe B, and you see which one people like more. Simple. You’re testing one entire version against another.

Multivariate testing is more like trying to perfect a single recipe by testing different combinations of ingredients. You might try three kinds of flour against two kinds of sugar to find the absolute best mix. It’s great for fine-tuning lots of little things at once, but it needs a ton of traffic to work.
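
A quick Python sketch shows why the traffic requirement grows so fast. The ingredient names and the monthly visitor figure are made up for illustration.

```python
from itertools import product

flours = ["all-purpose", "bread", "whole wheat"]
sugars = ["white", "brown"]

# Every flour paired with every sugar is its own version to test.
combinations = list(product(flours, sugars))
print(len(combinations))  # 3 x 2 = 6

monthly_visitors = 6000  # hypothetical traffic
print(monthly_visitors // 2)                  # 3000 visitors per version in an A/B test
print(monthly_visitors // len(combinations))  # 1000 visitors per combination
```

The same traffic that gives each version of an A/B test 3,000 visitors gives each recipe combination only 1,000, and adding a third ingredient to vary would thin that out even further.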

For most stores, especially when you’re just starting, A/B split testing is your best friend. It’s simpler, gives you faster answers, and is perfect for testing big, bold changes that can actually move the needle.

How Long Should I Run a Split Test?

This is the big one, and the answer isn’t “for a week” or “for a month.” The real answer is: until you reach statistical significance. How long that takes depends entirely on your traffic and your conversion rate.

A big-name store with tons of visitors might get a solid result in three days. A smaller, niche shop might need three weeks to gather enough data. The biggest mistake you can make is stopping a test early just because one version looks like it’s winning.

Your testing software does all the complicated math for you. It’ll tell you when you have enough data to trust the result. The main takeaway here? Trust the math, not the calendar.
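
If you want a rough feel for the timeline before you launch, you can estimate it with a standard sample-size approximation for comparing two conversion rates. The Python sketch below is a back-of-the-envelope planning aid under stated assumptions, not what your testing tool necessarily does: the 3% baseline rate, the 20% relative lift, the 80% power setting, and the traffic figures are all assumptions picked for illustration.

```python
from math import ceil
from statistics import NormalDist

def visitors_per_version(baseline, relative_lift, confidence=0.90, power=0.80):
    """Approximate visitors needed per version to detect the given lift."""
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

def days_needed(per_version, daily_visitors):
    """Days to fill both versions when traffic is split evenly."""
    return ceil(2 * per_version / daily_visitors)

needed = visitors_per_version(baseline=0.03, relative_lift=0.20)
print(needed)                       # roughly 11,000 visitors per version
print(days_needed(needed, 10_000))  # busy store
print(days_needed(needed, 1_000))   # smaller shop
```

Under these assumptions, a store with 10,000 visitors a day is done in about three days, while one with 1,000 a day needs around three weeks. Smaller expected lifts push the required numbers up sharply, so the result is very sensitive to the lift you plug in.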

Can I Run a Split Test with Low Traffic?

Absolutely. You just have to change your game plan.

With low traffic, getting to statistical significance takes a lot longer. That means you can’t waste time testing tiny tweaks. If your audience is smaller, you have to swing for the fences with big, high-impact changes.

- Don’t test button colors. The difference is just too small to show up in a limited data set.

- Do test a completely different headline. This can totally reframe your offer in a customer’s mind.

- Do test a different core offer. Pit “20% Off” against “Free Shipping” and see what really motivates your buyers.

- Do test a totally redesigned page layout. A fresh user experience can cause a massive shift in how people behave.

The goal is to test a change so bold that it creates a result you can’t miss, even with a smaller crowd.
